Simulate API Rate Limits

As your application grows in popularity, ensuring fair access to your software services and APIs becomes imperative. By setting up fair controls like rate limiting, you can manage server load and distribute resources evenly among all users.

In this context, Rate Limiting is a strategic approach employed by system architects to regulate the number of incoming requests a server handles within a given timeframe. This strategy is critical for maintaining service quality, deterring misuse, and optimizing the allocation of server resources.

Examples

- Social Media Platforms: Platforms like Twitter limit the number of API requests to prevent spam and abuse.

- Cloud Services: AWS and Google Cloud use rate limits to manage resource usage and ensure equitable access.

- Beeceptor's Free plan limits usage to 50 requests per day.

Types

Rate limits can be implemented at multiple levels in an API infrastructure. Some of the key types of rate limits are:

- User-Level Limits: Restrict the number of requests a single user or API key can make.

- Server-Level Limits: Apply globally across all users or per IP address.

- Endpoint-Specific Limits: Different endpoints/paths in an API may have different rate limits.
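
To make these levels concrete, here is a minimal sketch of a fixed-window counter in Python. The class name `FixedWindowLimiter`, its parameters, and the key tuples are illustrative assumptions, not part of any particular API gateway or of Beeceptor. The level at which a limit applies is decided purely by the key you pass in.

```python
import time
from typing import Callable, Dict, Hashable, Tuple


class FixedWindowLimiter:
    """Allow at most `limit` requests per `window_seconds` for each key.

    Illustrative sketch only: real gateways often use sliding windows or
    token buckets and keep their counters in shared storage.
    """

    def __init__(self, limit: int, window_seconds: float,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.limit = limit
        self.window_seconds = window_seconds
        self.clock = clock
        # key -> (window index, requests counted in that window)
        self._counters: Dict[Hashable, Tuple[int, int]] = {}

    def allow(self, key: Hashable) -> bool:
        """Return True if the request for `key` fits in the current window."""
        window = int(self.clock() // self.window_seconds)
        seen_window, count = self._counters.get(key, (window, 0))
        if seen_window != window:
            count = 0  # a new window started, so the counter resets
        if count >= self.limit:
            self._counters[key] = (window, count)
            return False
        self._counters[key] = (window, count + 1)
        return True
```

A key such as `("user", "alice")` gives a user-level limit, `("ip", "203.0.113.7")` a per-IP limit, and `("endpoint", "alice", "/search")` an endpoint-specific one. Different thresholds per level would need one limiter instance per level.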

Top 5 Key Strategies for Testing Rate-Limited APIs

Thorough testing of rate-limited APIs is essential for a robust application. Here are the top 5 aspects to focus on:

1. Understand Rate Limit Policies

- Review API Docs: Check for rate limits per user, IP, or endpoint. Look for HTTP headers like X-RateLimit-Limit, X-RateLimit-Remaining, and X-RateLimit-Reset.

- Identify Thresholds: Determine the maximum number of requests allowed per second, minute, or hour.

- Check for Variations: Be aware of differences in limits for authenticated vs. unauthenticated users or tiered subscription plans.
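
One way to capture a policy while exploring an API is to read the rate limit headers from a response into a small structure. The sketch below assumes the `X-RateLimit-*` header names mentioned above and assumes the reset value is an integer (commonly UNIX epoch seconds, though some APIs send a number of seconds instead). `RateLimitInfo` and `parse_rate_limit_headers` are hypothetical names chosen for this example.

```python
from dataclasses import dataclass
from typing import Mapping, Optional


@dataclass
class RateLimitInfo:
    limit: Optional[int]
    remaining: Optional[int]
    reset: Optional[int]  # meaning depends on the API; often epoch seconds


def _to_int(value: Optional[str]) -> Optional[int]:
    """Convert a header value to int, or None if missing or malformed."""
    try:
        return int(value) if value is not None else None
    except ValueError:
        return None


def parse_rate_limit_headers(headers: Mapping[str, str]) -> RateLimitInfo:
    # HTTP header names are case-insensitive, so normalise before lookup.
    lowered = {name.lower(): value for name, value in headers.items()}
    return RateLimitInfo(
        limit=_to_int(lowered.get("x-ratelimit-limit")),
        remaining=_to_int(lowered.get("x-ratelimit-remaining")),
        reset=_to_int(lowered.get("x-ratelimit-reset")),
    )
```

Comparing the parsed values for authenticated and unauthenticated calls, or across plans, is a quick way to spot the variations listed above.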

2. Simulate Real-World Scenarios

- Mimic Traffic Patterns: Certain bugs surface only under high load, so test under conditions like peak loads, sudden traffic spikes, or sustained high usage.

- Vary Request Types: Use different HTTP methods (GET, POST, PUT, DELETE) to ensure consistent handling across all endpoints.

- Simulate User Behavior: Test with multiple users or IPs accessing the API simultaneously to replicate real-world usage.

3. Test Edge Cases

- Exceed Limits: Send more requests than allowed to verify that the API returns a 429 Too Many Requests response.

- Validate Reset Behavior: Confirm the API correctly resets rate limits after the specified time window expires.

- Test Concurrency: Check how the API handles multiple parallel requests from the same user or IP address.
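
The exceed-limit and concurrency checks can be sketched as a small helper that fires requests in parallel and tallies the status codes. Here `send` is a stand-in for whatever function performs one HTTP call and returns its status code. Both `fire_requests` and `check_limit` are hypothetical helpers, and the check assumes every request lands inside a single rate limit window.

```python
from collections import Counter
from concurrent.futures import ThreadPoolExecutor
from typing import Callable


def fire_requests(send: Callable[[], int], total: int, workers: int = 8) -> Counter:
    """Call `send` `total` times across `workers` threads and tally status codes."""
    with ThreadPoolExecutor(max_workers=workers) as pool:
        futures = [pool.submit(send) for _ in range(total)]
        return Counter(future.result() for future in futures)


def check_limit(send: Callable[[], int], limit: int, extra: int = 5) -> Counter:
    """Send `limit + extra` requests and verify that the surplus is rejected."""
    tally = fire_requests(send, limit + extra)
    # Assumes all requests fall within one window; otherwise counts will drift.
    assert tally[200] == limit, f"expected {limit} successes, got {tally[200]}"
    assert tally[429] == extra, f"expected {extra} rejections, got {tally[429]}"
    return tally
```

To validate reset behavior, the same check can be run again after waiting for the window to expire; it should pass a second time.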

4. Implement Retry Logic

- Use Exponential Backoff: Implement retries with increasing delays to avoid overwhelming the API.

- Respect Retry-After Header: Follow the API’s suggested wait time provided in the Retry-After header.

- Add Fallback Mechanisms: Use cached data or alternative workflows when retries fail to ensure a smooth user experience.
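
The three points above can be combined in one retry loop. The following is a minimal sketch under a few assumptions: `send` is a hypothetical callable returning a `(status, headers, body)` tuple, only 429 responses are retried, and Retry-After is handled only when it carries a number of seconds (the header may also carry an HTTP date, which this sketch ignores). Random jitter is added to the backoff, which is a common refinement rather than something the list above requires.

```python
import random
import time
from typing import Callable, Mapping, Optional, Tuple

Response = Tuple[int, Mapping[str, str], bytes]  # (status, headers, body)


def retry_delay(attempt: int, headers: Mapping[str, str],
                base: float = 0.5, cap: float = 30.0) -> float:
    """Seconds to wait before retrying after failed attempt number `attempt` (0-based)."""
    lowered = {name.lower(): value for name, value in headers.items()}
    hint = lowered.get("retry-after", "").strip()
    if hint.isdigit():
        return float(hint)  # the server's suggested wait time wins
    # Exponential backoff with jitter: pick a random delay up to base * 2^attempt.
    return random.uniform(0.0, min(cap, base * 2 ** attempt))


def call_with_retries(send: Callable[[], Response], max_retries: int = 5,
                      fallback: Optional[Callable[[], bytes]] = None,
                      sleep: Callable[[float], None] = time.sleep) -> bytes:
    """Return the body of the first non-429 response, retrying on 429."""
    for attempt in range(max_retries + 1):
        status, headers, body = send()
        if status != 429:
            return body
        if attempt < max_retries:
            sleep(retry_delay(attempt, headers))
    if fallback is not None:
        return fallback()  # e.g. serve cached data
    raise RuntimeError("rate limited: retries exhausted")
```

Passing `sleep` as a parameter keeps the function easy to test, since a test can record the delays instead of actually waiting.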

5. Use Beeceptor for Controlled Testing

- Simulate Rate Limits: Configure custom rate limits in Beeceptor to mimic real API behavior and see how the application under test behaves.

- Test Failure Scenarios: Validate how your app handles 429 errors, slow responses, or intermittent failures.

- Safe Testing Environment: Avoid hitting production APIs during testing by using Beeceptor’s mock endpoints.

Setting Up Rate Limits in Beeceptor

Beeceptor is a powerful tool for HTTP debugging, especially useful for simulating APIs with rate limits. This feature is crucial when:

- developing and testing applications that interact with rate-limited third-party services

- creating a webhook feature in your app that includes a retry mechanism. To effectively test this, you need an HTTP endpoint capable of triggering rate limit errors (HTTP 429).

Beeceptor's user-friendly, no-code setup makes this super easy. Here's how to do it:

- Create a new endpoint at Beeceptor, naming it after your project.

- Go to the 'Your Endpoints' section.

- Select 'Settings' for one of your endpoints.

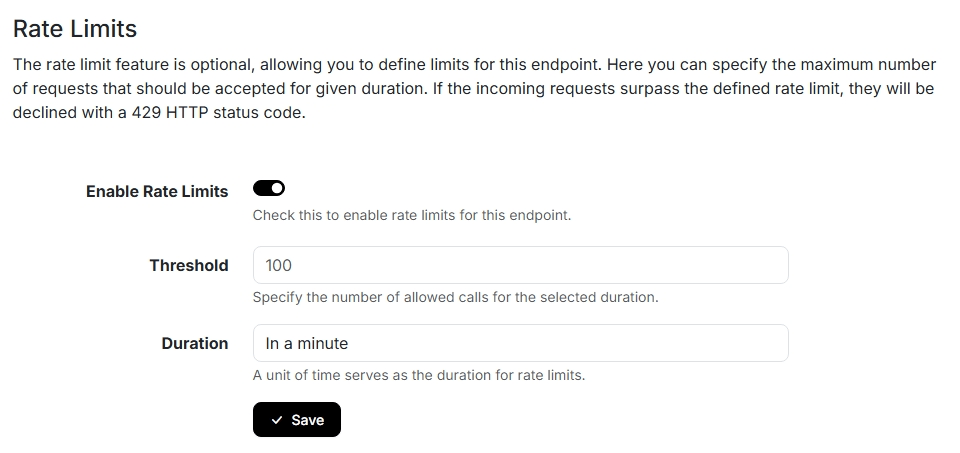

- Check the box to enable rate limits, then set the desired limit and time unit. Remember to save your changes. Below is a screenshot guiding you through these configuration steps.

Beeceptor rate limit setup: This example limits to 100 requests/minute for application testing.

- Test by accessing your endpoint more times than your set limit.

- Check for the receipt of 429 error responses, confirming the rate limit is in effect.
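
The last two steps can also be scripted. The sketch below uses only Python's standard library; the `ENDPOINT` URL is a placeholder for your own endpoint's address, and `fetch_status` and `probe` are hypothetical helper names.

```python
import urllib.error
import urllib.request
from collections import Counter
from typing import Callable

# Placeholder URL (an assumption): replace with your own endpoint's address.
ENDPOINT = "https://my-project.free.beeceptor.com/ping"


def fetch_status(url: str) -> int:
    """Return the HTTP status code for a GET request to `url`."""
    try:
        with urllib.request.urlopen(url, timeout=10) as response:
            return response.status
    except urllib.error.HTTPError as err:
        return err.code  # urllib raises on 4xx/5xx responses, including 429


def probe(url: str, attempts: int,
          fetch: Callable[[str], int] = fetch_status) -> Counter:
    """Request `url` `attempts` times in a row and tally the status codes."""
    return Counter(fetch(url) for _ in range(attempts))
```

With the limit set to 100 requests/minute as in the example above, calling `probe(ENDPOINT, 110)` within one minute should produce a tally that includes 429 responses once the limit is reached.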

Rate Limits Standard

While there is no universally defined standard for communicating API rate limits, a widely adopted practice involves using specific HTTP headers and a status code. The common implementation includes:

- HTTP Response Status Code: The 429 status code is commonly used to indicate rate-limited responses.

- HTTP Response Headers:

  - X-RateLimit-Limit: Indicates the maximum number of requests that can be made in a given timeframe.

  - X-RateLimit-Remaining: Shows the number of requests remaining in the current rate limit window.

  - X-RateLimit-Reset: Provides the time when the rate limit will reset, typically in UNIX Epoch time.
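
To illustrate how these pieces fit together on the server side, here is a minimal sketch of a function that computes the status code and headers for a request. It is an illustration of the convention only, not Beeceptor's implementation; the function name and its parameters are assumptions made for this example.

```python
import math
from typing import Dict, Tuple


def rate_limit_response(limit: int, used: int, window_start: float,
                        window_seconds: float) -> Tuple[int, Dict[str, str]]:
    """Return (status, headers) for the `used`-th request in the current window.

    `window_start` is the window's start time in UNIX epoch seconds.
    """
    reset_epoch = math.ceil(window_start + window_seconds)
    remaining = max(0, limit - used)  # never report a negative remainder
    headers = {
        "X-RateLimit-Limit": str(limit),
        "X-RateLimit-Remaining": str(remaining),
        "X-RateLimit-Reset": str(reset_epoch),
    }
    status = 200 if used <= limit else 429
    return status, headers
```

For a limit of 100 per 60-second window starting at epoch 1700000000, the 100th request returns 200 with zero remaining, and the 101st returns 429; both report a reset time of 1700000060.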

Beeceptor adheres to these commonly accepted guidelines for its rate limiting feature. Check out the feature documentation to learn what else you can do with it.

Benefits Of Throttled Mock APIs

A mock API with rate limiting streamlines integration testing and supports several common scenarios:

- Load Testing: Imagine your application connects to a social media API. Using Beeceptor, you can mimic the API's rate limits to see how your application handles high traffic during peak hours, like during a major event or product launch.

- Retry Logic Development: Consider an eCommerce app that communicates with a payment gateway. By simulating rate limits with Beeceptor, you can fine-tune the app's retry logic for times when the gateway is overwhelmed during big sales.

- Throttling Tests: Take a weather forecasting app that fetches data frequently. Using Beeceptor, you can throttle the API response to test how the app performs when data updates are intentionally slowed down, simulating network issues or server downtimes.

- Resource Allocation Testing: In a scenario where your app aggregates data from multiple sources, Beeceptor can help you understand how your app prioritizes and manages resources when one of its data sources enforces rate limits.

- Building Distributed Consumers: Imagine a logistics app that integrates with a rate-limited tracking API. Beeceptor can simulate this API, allowing you to develop efficient strategies for your app to retry or queue requests.
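
As one example of a queueing strategy for the last scenario, a consumer can pace its own requests so that it stays under the limit instead of reacting to 429 errors. The sketch below is a single-process illustration with hypothetical names; consumers spread across several processes or machines would need shared state, which is out of scope here.

```python
import time
from typing import Callable, Optional


class Pacer:
    """Space calls at least `window_seconds / limit` apart."""

    def __init__(self, limit: int, window_seconds: float,
                 clock: Callable[[], float] = time.monotonic,
                 sleep: Callable[[float], None] = time.sleep) -> None:
        self.interval = window_seconds / limit
        self.clock = clock
        self.sleep = sleep
        self._next_slot: Optional[float] = None  # earliest time of the next call

    def wait_for_slot(self) -> float:
        """Block until the next slot is free and return the seconds waited."""
        now = self.clock()
        if self._next_slot is None or now >= self._next_slot:
            waited, start = 0.0, now  # slot is already free
        else:
            waited = self._next_slot - now
            self.sleep(waited)
            start = self._next_slot
        self._next_slot = start + self.interval
        return waited
```

Each worker calls `wait_for_slot()` before sending a request. Pointing the worker at a rate-limited mock endpoint then shows whether the chosen pacing avoids 429 responses.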