Rate Limits For API Endpoints

Beeceptor adds a useful tool to your testing toolkit: rate-limited APIs. This feature lets you cap the number of incoming requests an endpoint accepts, giving you granular control over your APIs. This article covers the use cases, explains how to enable rate limits, and shows how to apply them to your specific needs.

This feature is available with paid plans.

Use case

Consider a scenario where you're building a queue consumer and pushing data to a third-party service. This third-party service has rate limits enabled. When a request is rate limited, your app is supposed to retry it.

Beeceptor lets you simulate and test how your consumer code behaves under rate-limited conditions. You can configure rate limits on a Beeceptor endpoint and set the maximum number of requests allowed per second, minute, or hour. The limit is tracked with a fixed window that runs from the beginning of the time unit to its end.

Rate-limited mock APIs are also useful for testing retry logic. For example, if you are building an eCommerce app that communicates with a payment gateway, you can fine-tune the app's retry logic for times when the gateway is overwhelmed during big sales.
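The retry behaviour described above can be exercised with a small client. The following is a minimal sketch, not a recommended production client: the function names `post_status` and `send_with_retry`, the attempt count, and the exponential backoff delays are all assumptions made for illustration.

```python
import time
import urllib.error
import urllib.request
from typing import Callable, Tuple


def post_status(url: str, body: bytes) -> int:
    """POST a JSON body and return the HTTP status code (hypothetical helper)."""
    request = urllib.request.Request(
        url,
        data=body,
        method="POST",
        headers={"Content-Type": "application/json"},
    )
    try:
        with urllib.request.urlopen(request, timeout=10) as response:
            return response.status
    except urllib.error.HTTPError as err:
        # urllib raises on 4xx/5xx responses; the status code is on the exception.
        return err.code


def send_with_retry(
    send: Callable[[], int],
    max_attempts: int = 5,
    base_delay: float = 0.5,
    sleep: Callable[[float], None] = time.sleep,
) -> Tuple[int, int]:
    """Call send() until it returns a status other than 429 or attempts run out.

    Returns (final_status, attempts_used). The delay doubles after each
    rate-limited attempt: base_delay, 2 * base_delay, 4 * base_delay, ...
    """
    status = 429
    for attempt in range(1, max_attempts + 1):
        status = send()
        if status != 429:
            return status, attempt
        if attempt < max_attempts:
            sleep(base_delay * 2 ** (attempt - 1))
    return status, max_attempts
```

Pointing the consumer at a rate-limited mock endpoint, for example with `send_with_retry(lambda: post_status(url, b"{}"))` where `url` is your own endpoint's address, lets you observe how many attempts are needed once the configured limit is reached.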

Configure Rate Limits

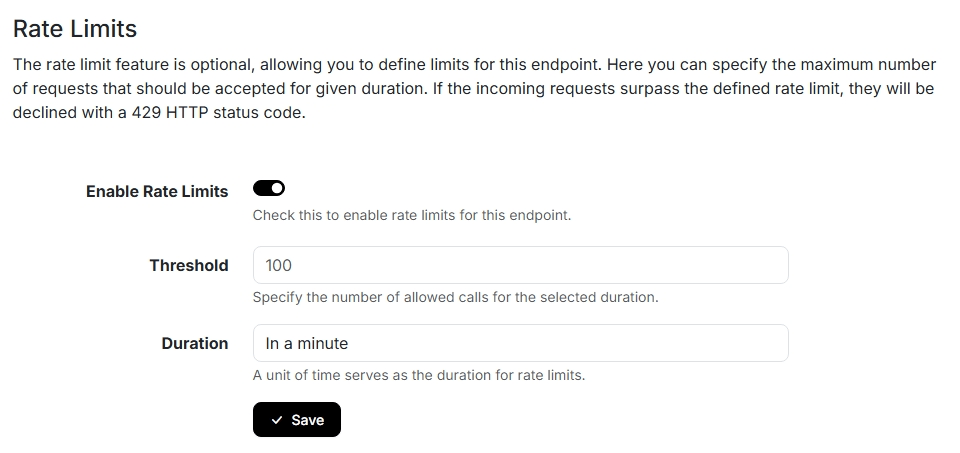

To enable rate limits, navigate to the endpoint's settings page. The screenshot in this section shows a rate limit of 100 requests per minute. You can select the time window for measuring the limit: a second, a minute, or an hour. Requests are counted from the beginning to the end of the selected time unit, following a fixed-window approach.

Note that these rate limits apply globally to the entire endpoint (sub-domain), not to specific IP addresses or HTTP routes. This means all request paths and source IP addresses are counted in one bucket.

When you update the rate limit configuration, the quota resets immediately for the current window. The request count starts again from zero for the selected period (second, minute, or hour).
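The counting rules in this section can be pictured with a short sketch of a fixed-window counter. This is an illustration only and not Beeceptor's implementation: the class `FixedWindowLimiter` and its methods are hypothetical, and aligning windows to clock boundaries is an assumed reading of "from the beginning to the end of the selected time unit".

```python
import time
from typing import Optional

# Length of each supported window, in seconds.
WINDOW_SECONDS = {"second": 1, "minute": 60, "hour": 3600}


class FixedWindowLimiter:
    """One shared bucket for the whole endpoint, counted per fixed window."""

    def __init__(self, limit: int, unit: str) -> None:
        self.configure(limit, unit)

    def configure(self, limit: int, unit: str) -> None:
        """Apply a new configuration and reset the count to zero."""
        self.limit = limit
        self.window = WINDOW_SECONDS[unit]
        self.window_start: Optional[int] = None
        self.count = 0

    def allow(self, now: Optional[float] = None) -> bool:
        """Return True if the request fits in the current window's quota."""
        now = time.time() if now is None else now
        # Windows are aligned to multiples of the window length.
        start = int(now // self.window) * self.window
        if start != self.window_start:
            # A new window has begun, so the count starts over.
            self.window_start = start
            self.count = 0
        if self.count >= self.limit:
            return False  # this request would receive a 429
        self.count += 1
        return True
```

Because the limiter keeps a single counter regardless of path or caller, every request to the endpoint draws from the same quota, which matches the one-bucket behaviour described above.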

Rate Limited Response

When a request exceeds the configured rate limit, Beeceptor responds with the standard HTTP status code 429 (Too Many Requests). None of the mock rules are executed, because rate limits are evaluated before rule execution.

Response Headers:

The response includes three additional HTTP response headers:

X-RateLimit-Limit: The maximum number of requests allowed within the set timeframe.

X-RateLimit-Remaining: The number of requests remaining within the timeframe.

X-RateLimit-Reset: The timestamp indicating when the rate limit will reset, expressed in seconds since the Unix epoch.
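A client can use these headers to decide how long to wait before retrying. The sketch below assumes the header values arrive as plain integer strings and that the client's clock is reasonably in sync with the server's; the names `RateLimitInfo` and `parse_rate_limit` are hypothetical.

```python
import time
from dataclasses import dataclass
from typing import Mapping, Optional


@dataclass
class RateLimitInfo:
    limit: int       # X-RateLimit-Limit
    remaining: int   # X-RateLimit-Remaining
    reset_at: int    # X-RateLimit-Reset, in seconds since the Unix epoch

    def seconds_until_reset(self, now: Optional[float] = None) -> float:
        """Seconds to wait until the window resets (never negative)."""
        now = time.time() if now is None else now
        return max(0.0, self.reset_at - now)


def parse_rate_limit(headers: Mapping[str, str]) -> RateLimitInfo:
    """Read the three rate limit headers from a response."""
    # HTTP header names are case-insensitive, so normalise before lookup.
    lowered = {name.lower(): value for name, value in headers.items()}
    return RateLimitInfo(
        limit=int(lowered["x-ratelimit-limit"]),
        remaining=int(lowered["x-ratelimit-remaining"]),
        reset_at=int(lowered["x-ratelimit-reset"]),
    )
```

Sleeping for `seconds_until_reset()` after a 429 is one way to avoid retrying before the window has rolled over.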

Response Payload:

{
  "error": {
    "code": 429,
    "message": "You have exceeded the rate limit for this API endpoint."
  }
}

This JSON response is designed to work with most integrations. If the fixed response doesn't work for you, please reach out to the Support Team.
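When logging or asserting on rate-limited responses in tests, it can help to recognise the payload shown above. The helper below is a minimal sketch; the function name `rate_limit_message` is hypothetical, and it assumes the body has exactly the shape documented here.

```python
import json
from typing import Optional


def rate_limit_message(status: int, body: str) -> Optional[str]:
    """Return the error message from a 429 payload, or None if it doesn't match."""
    if status != 429:
        return None
    try:
        payload = json.loads(body)
    except json.JSONDecodeError:
        return None
    error = payload.get("error") if isinstance(payload, dict) else None
    if isinstance(error, dict) and error.get("code") == 429:
        return error.get("message")
    return None
```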